Utherverse Metaverse Launching a Next-Generation Web3 Closed Beta

Utherverse, a major metaverse platform, is set to officially launch a closed beta version of its next-generation metaverse later this month. The new metaverse platform has been described as…

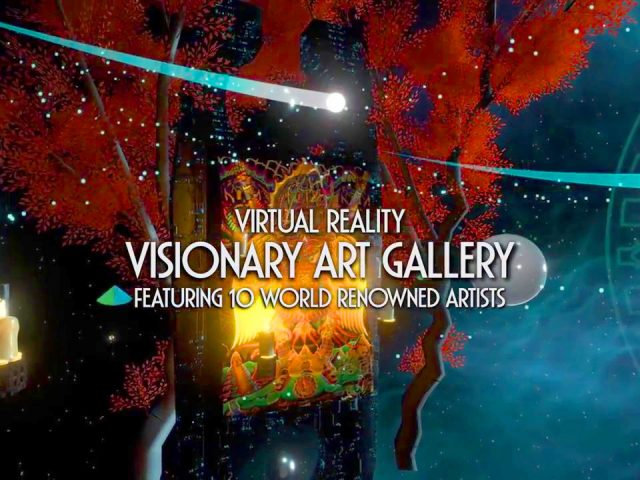

Galactic Gallery: Explore the World of Immersive Visionary Art

What does VR art really look and feel like? Most of us have probably experienced virtual reality media streaming and “perceptible” VR applications, and we might have a rough idea…

Marvel Powers United VR Announced for Oculus Rift

Marvel Powers United VR lets you team up with three of your friends with an Oculus Rift headset to fight the forces of evil in real time as Marvel superheroes.…

List of Space Exploration VR Experiences

Walk in space without leaving your seat, thanks to space exploration VR experiences. (Photo: Rewind) The Universe is unfathomably massive – it's so vast that so far mankind has barely…

Hollow Studios & Cedar Fair Make 4D VR Experience

Virtual reality and horror fans alike will be thrilled with Hollow Studios’ announcement. The media production company partnered with Cedar Fair Entertainment to open FEARVR: 5150, a 4D VR horror…

3DSunshine Lets You Play God in Virtual Reality

Both gamers and non-gamers have felt the desire to be God for a second, able to change things and do whatever they want. Gamers, for instance,…

Star Wars Enters the World of Virtual Reality

At the London Star Wars Celebration, Lucasfilm announced that it will collaborate with David Goyer (screenwriter for Batman v Superman: Dawn of Justice, Christopher Nolan’s Batman trilogy, and Man of…

Polynomial 2 Mesmerizes You In Virtual Reality

Sometimes the most beautiful and entertaining video games are not the big-budget productions from well-established gaming companies. As there is beauty in the small things of life, there is…

Mind Blowing New Technologies Coming to AR/VR

Augmented reality and virtual reality are amazing new technologies by themselves; however, they are supported or complemented by a set of resources that would not otherwise make the virtual experience…

Articles Claiming That VR Will Bring the End of Civilization

Just as VR itself is inevitable, so are the articles predicting this technology will ruin civilization. The battle over whether virtual reality is safe for children (or adults for that…

List of Omnidirectional Treadmills Under Development

Omnidirectional treadmills, like the Omnideck (shown), open up new degrees of freedom for VR interactions. (Photo: Omnifinity) Maybe the term "omnidirectional treadmills" had you asking: what is it, exactly? Gradually…

Big List of All Worlds for Virtual Reality

High Fidelity's virtual world allows its users to play games as they were intended in real life. (Photo: High Fidelity) (Last updated July 23, 2016, with more VR worlds added.)…